Designing For Voice: The Power of Prototyping

Introduction

We’ve long used language to communicate with machines. Back in the early days of computing, engineers laboriously and meticulously mapped their ideas to ones and zeros by poking holes into punch cards — slowly and carefully spelling out commands, using the earliest of machine languages. While this wasn’t the friendliest “language,” it was, in fact, a language by textbook definition. We spoke the language of the machines.

Over time, computer scientists developed artificial languages that made it easier for humans to translate human thought into machine language. While not exactly English, some artificial languages come pretty close. Take a look at this example from the Structured Query Language (SQL), a programming language used by administrators for managing data in a database:

SELECT users.full_name FROM users WHERE users.email = 'bob@example.com'

You don’t need to be a programmer to generally understand what’s happening there. Similarly, consider this example in AppleScript, a language used by macOS developers to automate tasks on the desktop:

display dialog "Hello World"

Making the Case for Voice

It sometimes surprises me that we need to “make the case for voice” given that humans have been moving in this direction from the moment they first started “speaking” to machines.

In a nutshell, voice makes sense because it’s:

- Natural - We’re leveraging a skill set that’s familiar to us, one that we’re innately driven to master from our earliest days.

- Fast - The average person speaks 150 words per minute, but only types 40 words per minute.

- Accessible - Consider situations where your hands and eyes are unavailable, such as driving, showering, or cooking. These situations are good candidates for voice.
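
To put the speed difference in concrete terms, here is a small back-of-the-envelope sketch in Python. It uses only the two rates quoted above (150 words per minute spoken, 40 typed); the 100-word message length and the function name are assumptions chosen purely for illustration.

```python
SPEAKING_WPM = 150  # average speaking rate quoted above
TYPING_WPM = 40     # average typing rate quoted above


def seconds_to_deliver(word_count: int, words_per_minute: float) -> float:
    """Return how many seconds it takes to deliver word_count words."""
    return word_count / words_per_minute * 60


# Hypothetical 100-word message, chosen only for illustration.
message_words = 100
spoken = seconds_to_deliver(message_words, SPEAKING_WPM)
typed = seconds_to_deliver(message_words, TYPING_WPM)

print(f"Spoken: {spoken:.0f} s, typed: {typed:.0f} s")
print(f"Speaking is {typed / spoken:.2f}x faster")
```

For a 100-word message, this works out to roughly 40 seconds spoken versus 150 seconds typed, or about 3.75 times faster.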

Why now? Only recently have advances in technology, the availability of hardware and software ecosystems, and growing consumer demand come together. The result looks very much like a perfect storm: we’re finally at a point where voice and conversational applications are a reality.

Designing for Voice is Difficult

Amazing! Machines are finally starting to understand humans — it’s all 🦄 and 🌈 now, right?

Alas, no. Creating voice and natural language user experiences isn’t easy. In fact, the hardest part of the process isn’t the development; it’s designing the user experience. This surprised me, so I decided to look at the data.

It turns out that while almost half of Amazon Echo and Google Home owners use their smart speakers "regularly" or "all of the time," the chances that these same individuals are using installed skills are slim. The average retention rate for an Alexa skill after one week is around 6%. A vast majority of published skills are "zombie apps" that are used only once and then discarded. [6]

What’s the problem here? Why are so many people actively using their smart speakers, but quickly abandoning skills after installing them? Simply put, the high abandonment rate is driven by consumers who have high expectations and are quick to abandon an app that is difficult to use.

Given the industry’s collective lack of experience in developing great conversational applications, it’s fair to say most skills fall into the category of “difficult to use.” Jason Amunwa summed it up best: “Voice UX represents the biggest challenge since the birth of the smartphone.” [7]

From my own experience, I’ve seen the many challenges that Voice UX designers face. These fall into four broad categories:

- Creation - Voice UX designers don’t actively engage potential end users and key stakeholders early enough in the conversational dialogue creation process. Most often, they create written scripts and circulate them for feedback. Typically, end users and stakeholders provide little to no feedback until they try the voice experience firsthand, which usually happens during or after development. This is too late for most meaningful feedback.

- Testing - Voice UX designers have limited ways to test assumptions with end users (e.g. What will a user say in response to a prompt? Will the bot’s response make sense? Is the dialog too long?). This is partly a process problem, partly a tools problem. There’s little tooling to facilitate meaningful test experiences (e.g. via Wizard of Oz testing) at scale.

- Measurement - Voice UX designers have limited ability to identify and measure areas of friction at scale within the Voice UX; they also have limited access to quantifiable data points to inform decision making. What part of the experience falls short? Where are users tripped up?

- Iteration - Product owners have limited ability to rapidly iterate and make adjustments to conversational dialogues, to test each iteration, and to measure impact of these changes.
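
One way to make the measurement challenge concrete is to count, for each step of a dialogue, how many test sessions reached it and how many ended there without completing the conversation. The sketch below is a minimal illustration in Python, not a description of how any particular platform works; the session format, the step names, and the `drop_off_by_step` function are all hypothetical.

```python
from collections import Counter

# Hypothetical test sessions: each is the ordered list of dialogue steps
# a tester reached. Step names are made up for illustration.
sessions = [
    ["welcome", "ask_city", "ask_date", "confirm"],
    ["welcome", "ask_city"],
    ["welcome", "ask_city", "ask_date"],
    ["welcome"],
    ["welcome", "ask_city", "ask_date", "confirm"],
]

FINAL_STEP = "confirm"  # assumed marker for a completed dialogue


def drop_off_by_step(sessions, final_step):
    """Return {step: (reached, abandoned, abandon_rate)} for each step."""
    reached = Counter()
    abandoned = Counter()
    for steps in sessions:
        if not steps:
            continue
        reached.update(set(steps))   # count each step once per session
        last = steps[-1]
        if last != final_step:
            abandoned[last] += 1     # session ended before completion
    return {
        step: (reached[step], abandoned[step], abandoned[step] / reached[step])
        for step in reached
    }


report = drop_off_by_step(sessions, FINAL_STEP)

# List steps from highest to lowest abandonment rate.
for step, (n_reached, n_abandoned, rate) in sorted(
    report.items(), key=lambda item: item[1][2], reverse=True
):
    print(f"{step:10s} reached={n_reached} abandoned={n_abandoned} rate={rate:.0%}")
```

With this made-up data, `ask_date` has the highest abandonment rate (one of the three sessions that reached it), which would make it the first prompt to revisit.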

Process, Tips & Tools: Prototyping is Powerful

While web and mobile design have established best practices and standards, conversational voice design is new, and its design standards are still being developed. Fortunately, a number of great resources are starting to emerge to help everyone learn best practices regarding process, tips and tools.

Process - When it comes to process, Amazon offers a free Conversational Design Workshop. Voice UX design expert Ben Sauer offers a great 65-minute on-demand seminar called Designing Dialogue: an Intro to VUI Design. Career Foundry also offers a course recommended by Amazon titled Become a UX Designer.

Tips - Voice UX designer Ben Sauer also maintains a blog called Voice Principles that includes a very useful “list of lists” outlining many guiding principles and tips from Amazon, Google, Microsoft, IBM, and others. Head over to that site for a great compendium of voice UX design wisdom.

Tools - From a tooling perspective, Orbita recently added a powerful feature called Orbita Prototype. It addresses the main challenges experienced by Voice UX designers in the four areas described above regarding creation, testing, measurement, and iteration.

Enhancing Efficiency and Accuracy With Orbita Prototype

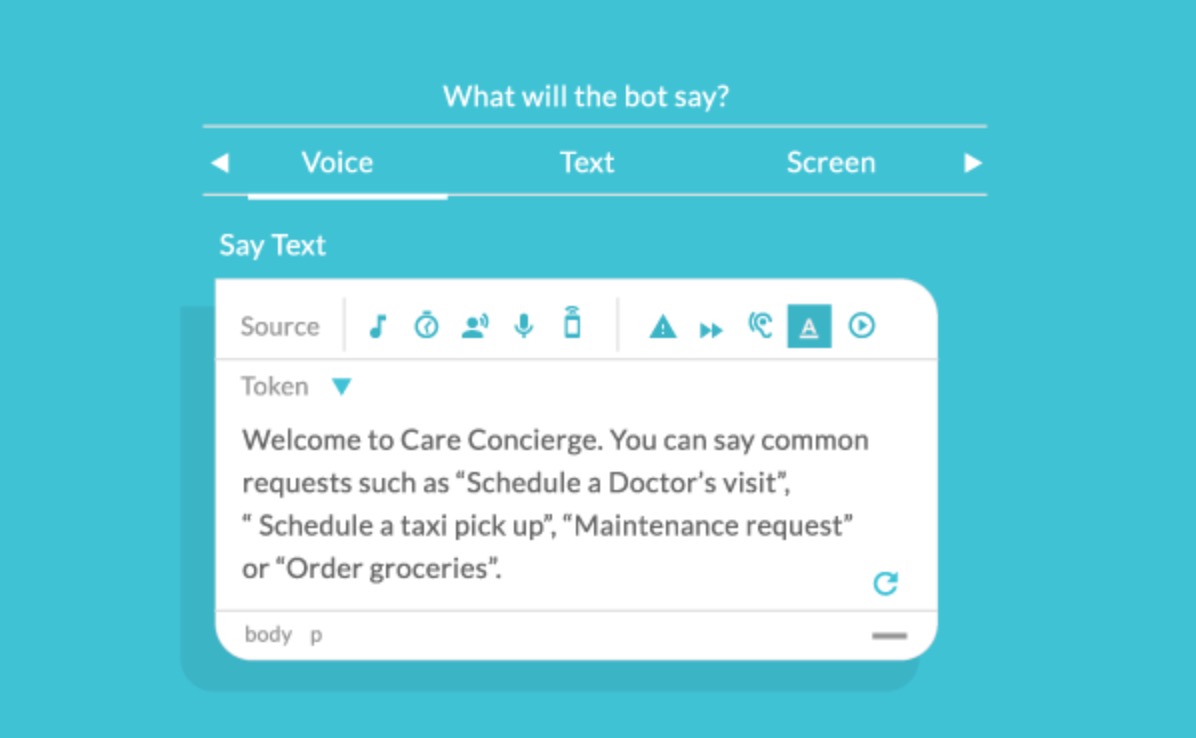

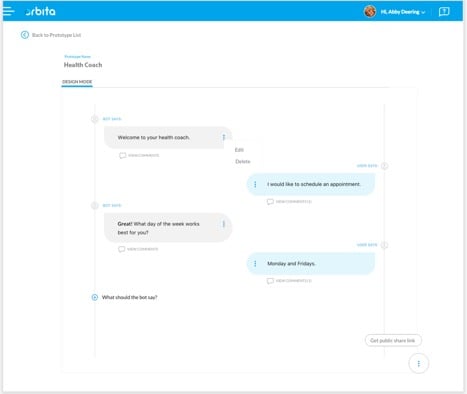

Orbita Prototype is a powerful engine that enables rapid prototyping of conversational dialogues without requiring any coding, much like creating a wireframe for a website or a sketch for a mobile app. Consider the following image, for example.

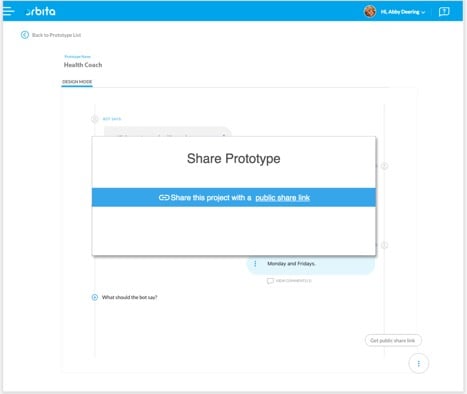

With Orbita Prototype, a UX designer can quickly create a conversational dialogue and make it available through a publicly accessible URL. The UX designer can readily share a “rough draft” of the experience.

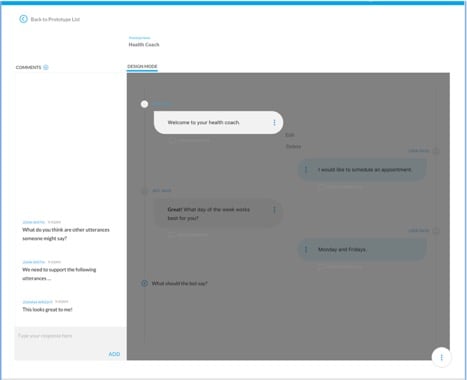

Additionally, Orbita Prototype provides functionality for testers, potential end users, and key stakeholders to “trial” an experience and provide feedback. This helps designers identify areas of friction within the UX and align priorities for future revisions and iterations.

In the absence of prototyping, product owners cannot actively participate in the dialogue creation process. At best, they review written scripts. Typically, little or no feedback is provided until the dialogue is heard or seen, which is most often during or after the development process.

Without a tool like Orbita Prototype, product owners may struggle to test assumptions with end users: “What will a user say in response to a prompt?” “Will the bot’s response make sense?” “Is the dialog too long?” Additionally, product owners have no ability to identify potentially problematic areas, nor do they have access to quantifiable data points to inform decision making.

Orbita Prototype is a powerful engine for iterating on a project in near real-time. It enables product owners to quickly make adjustments to conversational dialogues, test adjustments, and measure the impact of these changes. Precious time is saved.

Conclusion

A positive end-user experience is fundamental to any successful project. Whether the desired outcome of a voice project is to improve staff productivity, increase customer/patient satisfaction, and/or decrease costs, Orbita Prototype improves a developer’s ability to create positive, engaging, and effective end-user experiences in a highly efficient way.

Resources:

[1] https://techcrunch.com/2017/05/04/report-smartphone-owners-are-using-9-apps-per-day-30-per-month/

[2] http://info.localytics.com/blog/24-of-users-abandon-an-app-after-one-use

[3] https://www.recode.net/2017/1/23/14340966/voicelabs-report-alexa-google-assistant-echo-apps-discovery-problem

[4] https://medium.com/the-mission/nobody-cares-about-your-amazon-alexa-skill-ac14bd080327

[5] https://www.appcues.com/blog/app-retention-is-hard-heres-how-to-improve-it

[6] https://voicebot.ai/2017/09/20/voice-app-retention-doubled-9-months-according-voicelabs-data/

[7] https://www.dtelepathy.com/blog/design/the-ux-of-voice-the-invisible-interface